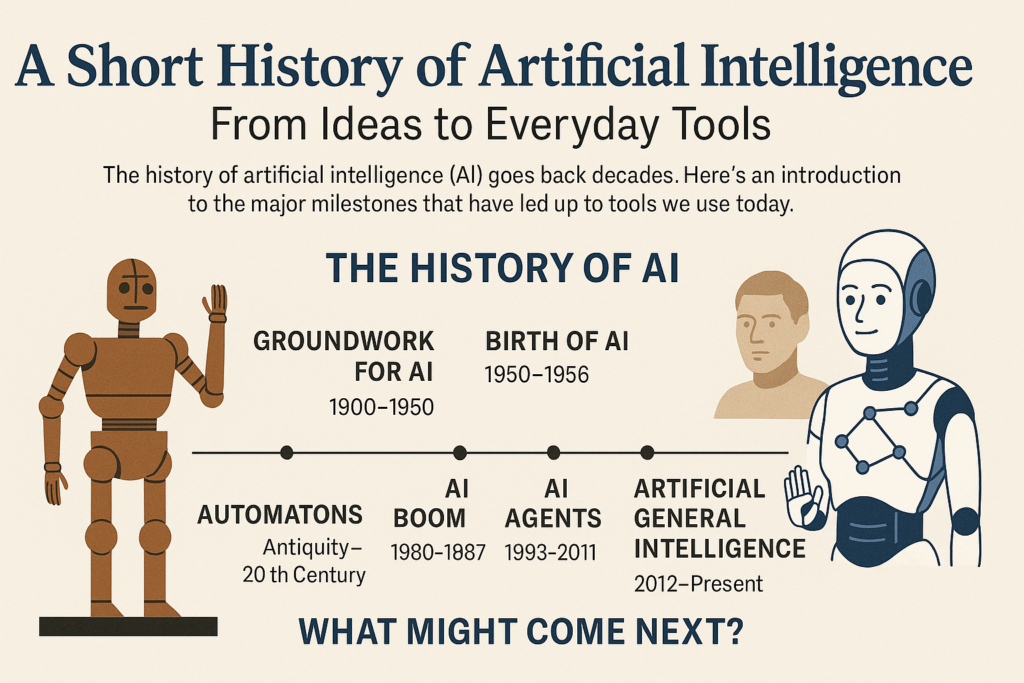

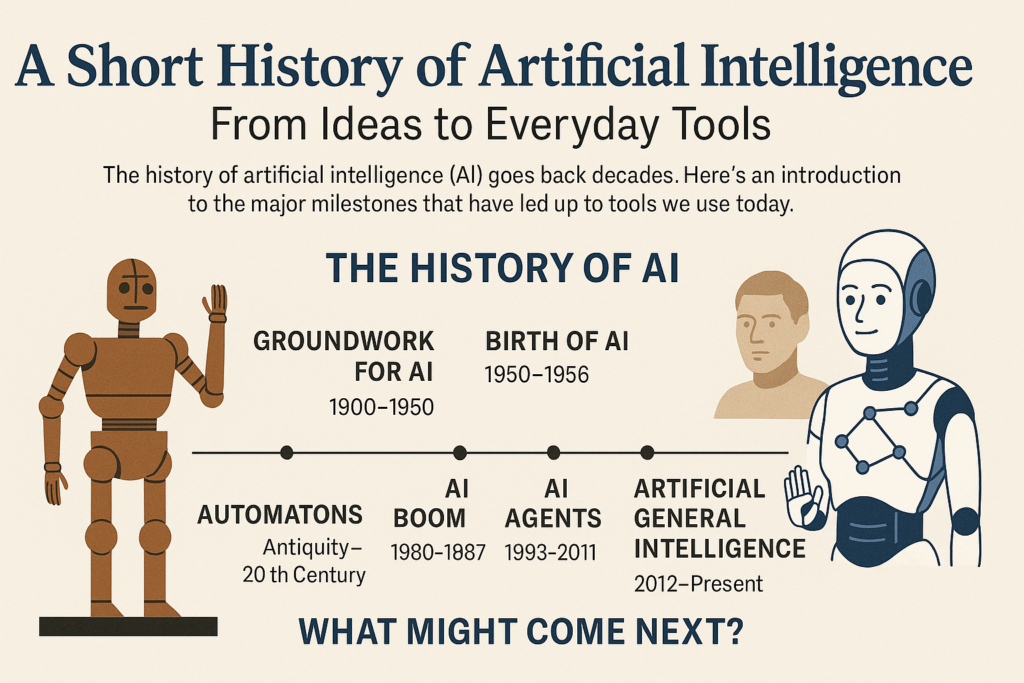

A Short History of Artificial Intelligence: From Ideas to Everyday Tools

To most people, AI feels “new” because tools like ChatGPT, Claude, and Midjourney only hit the mainstream in the last few years. But the story of artificial intelligence stretches back more than a century—and its roots go even further, into ancient myths and mechanical inventions.

Understanding where AI came from helps make sense of where it is now—and where it might be heading next. In this overview, we’ll walk through the major milestones: from early automatons and theoretical work, to the first AI boom, the “AI winter,” and today’s wave of powerful models and agents.

In this article, we’ll cover:

- What is artificial intelligence?

- The history of AI

- Groundwork for AI (1900–1950)

- Birth of AI (1950–1956)

- AI maturation (1957–1979)

- AI boom (1980–1987)

- AI winter (1987–1993)

- AI agents and everyday AI (1993–2011)

- Artificial General Intelligence era (2012–present)

- What does the future hold?

What is artificial intelligence?

Artificial intelligence is a branch of computer science focused on building systems that can do things we normally associate with human intelligence: understanding language, solving problems, learning from experience, and making decisions.

Instead of following a fixed list of instructions forever, AI systems:

- take in large amounts of data

- find patterns in that data

- adjust their behavior based on what they’ve “seen” before

Traditional software improves when a human developer edits the code. AI systems can improve by training on more data or by refining the way they learn—without rewriting every rule by hand.
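To make that contrast concrete, here is a minimal sketch of a program whose behavior changes when it sees more data, with no edits to its code. The spam data, the `learn_threshold` function, and the "count the exclamation marks" feature are all hypothetical, chosen only for illustration. Real systems use far richer models, but the idea is the same.

```python
def learn_threshold(examples):
    """Pick the cutoff that misclassifies the fewest labeled examples.

    examples: list of (value, label) pairs, where label is True or False.
    A value is predicted True when it is greater than or equal to the cutoff.
    """
    candidates = sorted(value for value, _ in examples)
    best_cutoff, best_errors = None, len(examples) + 1
    for cutoff in candidates:
        # Count how many examples this cutoff would get wrong.
        errors = sum((value >= cutoff) != label for value, label in examples)
        if errors < best_errors:
            best_cutoff, best_errors = cutoff, errors
    return best_cutoff


def predict(cutoff, value):
    """Apply the learned rule to a new value."""
    return value >= cutoff


# Hypothetical data: (exclamation marks in a message, is it spam?)
small_data = [(0, False), (1, False), (5, True), (6, True)]
more_data = small_data + [(2, False), (3, True), (4, True)]

cutoff_small = learn_threshold(small_data)  # learned from 4 examples
cutoff_more = learn_threshold(more_data)    # learned from 7 examples

# The same code now classifies a 4-exclamation-mark message differently,
# because the extra examples moved the learned cutoff.
print(cutoff_small, predict(cutoff_small, 4))
print(cutoff_more, predict(cutoff_more, 4))
```

Nobody rewrote the rule between the two runs. The only thing that changed was the data the program learned from.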

The history of artificial intelligence

Long before computers: automatons and artificial beings

The basic idea of “artificial intelligence” is older than electricity. Ancient philosophers and storytellers explored questions like: what makes something alive? Could a human-made object think or move on its own?

Over the centuries, inventors built automatons—mechanical devices that appeared to move independently. The word comes from ancient Greek and roughly means “acting on its own.”

- Around 400 BCE, Archytas of Tarentum, a friend of the philosopher Plato, is said to have built a mechanical pigeon that could fly.

- Around 1495, Leonardo da Vinci designed a mechanical knight, one of the best-known historical automatons.

For this article, though, we’ll focus on the 20th century, when mathematics, engineering, and computing started to converge into what we now call AI.

Groundwork for AI (1900–1950)

Between 1900 and 1950, the idea of intelligent machines moved from science fiction into early scientific discussion. “Artificial people” and mechanical humans began to appear in plays, books, and films—and scientists started asking whether we could build an artificial brain, not just mechanical limbs.

Early “robots” from this period were mostly mechanical or steam-powered. They could move, make facial expressions, and sometimes walk, but there was no real “intelligence” behind them yet.

Key milestones:

- 1921 – Czech playwright Karel Čapek’s play R.U.R. (Rossum’s Universal Robots) premieres, introducing the word robot for its artificial workers.

- 1929 – Japanese professor Makoto Nishimura builds Gakutensoku, the first known Japanese robot.

- 1949 – Computer scientist Edmund Callis Berkeley publishes Giant Brains, or Machines That Think, explicitly comparing modern computers to human brains.

These ideas laid the conceptual foundation for AI: if machines could process information like brains, could they also reason and learn?

Birth of AI (1950–1956)

The early 1950s are often described as the official birth of AI as a field.

In 1950, mathematician Alan Turing published Computing Machinery and Intelligence, asking a now-famous question: “Can machines think?” He proposed what we now call the Turing Test (originally “The Imitation Game”) as a way to measure machine intelligence based on behavior, not internals.

At the same time, computers were becoming powerful enough to do more than just arithmetic.

Key milestones:

- 1950 – Alan Turing publishes Computing Machinery and Intelligence, introducing the idea of testing machine intelligence through conversation.

- 1952 – Computer scientist Arthur Samuel develops a checkers-playing program that improves its play over time—one of the first examples of a program that “learns” a task.

- 1955–1956 – John McCarthy and colleagues propose the Dartmouth Summer Research Project on Artificial Intelligence in 1955, coining the term artificial intelligence. The workshop itself, held in the summer of 1956, helps launch AI as a formal research discipline.

From this point forward, “AI” became a word used not just in science fiction, but in research labs and universities.

AI maturation (1957–1979)

From the late 1950s through the 1970s, AI grew rapidly—but not without obstacles. Early optimism (“we’ll crack human-level AI in a few decades”) ran into the hard limits of hardware, algorithms, and funding.

Still, this era produced many core ideas and technologies that modern AI builds on.

Key milestones:

- 1958 – John McCarthy creates LISP (List Processing), one of the first programming languages designed for AI research—and still in use today.

- 1959 – Arthur Samuel popularizes the phrase “machine learning” while describing his work on programs that improve at checkers over time.

- 1961 – The first industrial robot, Unimate, starts work at a General Motors plant in New Jersey, moving die castings and welding parts in dangerous conditions better suited for machines than people.

- 1965 – Edward Feigenbaum and Joshua Lederberg begin work on DENDRAL, one of the first expert systems, designed to mimic how human specialists reason about complex domains.

- 1966 – Joseph Weizenbaum creates ELIZA, a “chatterbot” that uses early natural language processing to mimic a psychotherapist. It’s one of the first programs to give people the eerie feeling of “talking to a machine.”

- 1968 – Soviet mathematician Alexey Ivakhnenko publishes work that anticipates deep learning, introducing methods that would later influence modern neural networks.

- 1973 – Mathematician James Lighthill delivers a report to the British Science Research Council arguing that AI progress hasn’t lived up to promises, which leads to a sharp reduction in UK funding.

- 1979 – The Stanford Cart, originally developed in the 1960s and refined over time, successfully navigates a room full of chairs without human input—an early autonomous vehicle.

- 1979 – The American Association for Artificial Intelligence (now the Association for the Advancement of Artificial Intelligence, AAAI) is founded, formalizing AI as an academic and professional field.

By the late 1970s, AI had real achievements (robots, expert systems, early self-driving experiments), but also growing skepticism about how far and how fast it could go.
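Expert systems are easier to picture with a small example. The sketch below shows forward chaining, one common way rule-based systems reason: start from known facts and keep applying if-then rules until nothing new can be concluded. The rules and fact names are invented for illustration and are not taken from any historical system.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived.

    facts: iterable of strings that are known to be true.
    rules: list of (conditions, conclusion) pairs, where conditions is a
           set of facts that must all hold for the conclusion to be added.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conclusion not in known and all(c in known for c in conditions):
                known.add(conclusion)
                changed = True  # a new fact may unlock further rules
    return known


# Hypothetical rules for configuring a computer order.
RULES = [
    ({"needs_graphics"}, "add_gpu"),
    ({"add_gpu"}, "upgrade_power_supply"),
    ({"needs_graphics", "needs_storage"}, "use_large_case"),
]

derived = forward_chain({"needs_graphics", "needs_storage"}, RULES)
print(sorted(derived))
```

Every conclusion here traces back to a rule a person wrote down. That made these systems easy to explain, but it also meant that each new situation needed new hand-written rules.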

AI boom (1980–1987)

The early 1980s brought a surge of optimism and investment in AI—often called an “AI boom.” Expert systems and new learning approaches attracted funding from governments and corporations, and AI started to show up in commercial products.

Key milestones:

- 1980 – The first AAAI conference is held at Stanford, gathering a growing AI research community.

- 1980 – XCON, one of the first large-scale commercial expert systems, is deployed to help configure computer systems based on customer needs.

- 1981 – The Japanese government launches the ambitious Fifth Generation Computer Systems project, aiming to build machines that could reason and communicate like humans.

- 1984 – AAAI warns of a possible “AI winter” if expectations stay unrealistic and funding dries up.

- 1985 – AARON, an autonomous drawing program, is demonstrated—an early example of AI-created art.

- 1986 – Ernst Dickmanns and his team at Bundeswehr University, Munich, demonstrate an early driverless car that can reach speeds of around 55 mph on unobstructed roads.

- 1987 – Alacrity, a strategy advisory system based on a 3,000+ rule expert system, launches commercially.

Despite progress, many systems were expensive, brittle, and hard to maintain. That gap between promise and practical value set the stage for the downturn that followed.

AI winter (1987–1993)

An “AI winter” is a period when interest in and funding for AI drop sharply. The late 1980s into the early 1990s saw one of the most significant of these downturns.

Specialized AI hardware and expert systems turned out to be costly, hard to scale, and sometimes inferior to simpler, cheaper solutions.

Key milestones:

- 1987 – The market for specialized LISP hardware collapses as general-purpose machines from companies like IBM and Apple become powerful enough to run LISP directly. Many niche AI hardware companies close.

- 1988 – Programmer Rollo Carpenter creates Jabberwacky, a chatbot designed to have humorous, entertaining conversations with humans.

Private investors and governments pulled back, slowing progress—but not stopping it. Fundamental research continued, and some of the groundwork for today’s AI was laid during this “quieter” time.

AI agents and everyday AI (1993–2011)

The 1990s and 2000s saw AI systems slowly move from research labs into everyday life: search engines, recommendation systems, simple robots, and digital assistants.

This era also brought AI agents to the fore: systems that can sense, decide, and act in an environment, not just calculate.
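The sense, decide, act cycle can be sketched in a few lines. The example below is a toy: a hypothetical vacuum agent moving along a row of cells, loosely inspired by the home robots of this era. The function name, the world layout, and the action names are all assumptions made for illustration.

```python
def run_vacuum_agent(world, position=0, max_steps=20):
    """Run a simple agent over a row of cells and return its action log.

    world: list of booleans, True meaning the cell is dirty.
           The list is modified in place as cells are cleaned.
    """
    actions = []
    for _ in range(max_steps):
        # Sense: read the state of the current cell.
        dirty_here = world[position]

        # Decide: choose an action based on what was sensed.
        if dirty_here:
            action = "clean"
        elif position < len(world) - 1:
            action = "move_right"
        else:
            action = "stop"

        # Act: change the environment or the agent's own position.
        actions.append(action)
        if action == "clean":
            world[position] = False
        elif action == "move_right":
            position += 1
        else:
            break
    return actions


world = [False, True, False, True]
log = run_vacuum_agent(world)
print(log)
print(world)
```

The agent is never handed a script of moves. It looks at its surroundings on every step and picks the next action from what it finds, which is the basic pattern behind far more capable agents.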

Key milestones:

- 1997 – IBM’s Deep Blue defeats world chess champion Garry Kasparov, the first time a computer beats a reigning world champion in a match under standard chess tournament conditions.

- 1997 – Dragon Systems releases Dragon NaturallySpeaking, speech recognition software for Microsoft Windows, bringing voice tech to everyday users.

- 2000 – Professor Cynthia Breazeal unveils Kismet, a robot designed to simulate human-like emotions with facial expressions (eyes, eyebrows, ears, mouth).

- 2002 – The first Roomba robotic vacuum is released, bringing simple home robotics to the mass market.

- 2003 – NASA launches the Spirit and Opportunity rovers, which land on Mars in January 2004 and navigate parts of the Martian surface with increasing autonomy.

- 2006 – Companies like Twitter, Facebook, and Netflix begin using AI-powered algorithms to personalize feeds, recommendations, and ads.

- 2010 – Microsoft introduces the Xbox 360 Kinect, consumer hardware that uses AI to track body movement and translate it into game controls.

- 2011 – IBM’s Watson wins Jeopardy! against two former champions, showcasing powerful natural language understanding and retrieval.

- 2011 – Apple launches Siri, one of the first widely adopted virtual assistants on smartphones.

By the end of this period, AI was no longer just a research topic—it was quietly shaping what people watched, searched, bought, and played.

Artificial General Intelligence era (2012–present)

From roughly 2012 onward, AI entered a new phase driven by deep learning, big data, and massive compute power. While true Artificial General Intelligence (AGI)—a system with human-level flexibility across tasks—remains a goal rather than a reality, modern models are far more capable than previous generations.

AI is now woven into everyday tools: search, translation, recommendation systems, image recognition, and, more recently, generative models that create text, images, audio, and video.

Key milestones:

- 2012 – Google researchers Jeff Dean and Andrew Ng train a large neural network to recognize cats from unlabeled YouTube images, a landmark result for deep learning.

- 2015 – Elon Musk, Stephen Hawking, Steve Wozniak, and thousands of others sign an open letter calling for a ban on offensive autonomous weapons in warfare.

- 2016 – Hanson Robotics unveils Sophia, a humanoid robot with realistic facial expressions and the ability to detect and mimic emotions.

- 2017 – Two experimental Facebook chatbots, trained to negotiate, drift away from English and develop a shorthand “language” between themselves—sparking debate about AI behavior and control.

- 2018 – Chinese company Alibaba reports that its language-processing AI scores slightly higher than humans on a Stanford reading comprehension benchmark.

- 2019 – DeepMind’s AlphaStar reaches Grandmaster level in StarCraft II, outperforming all but the top 0.2% of human players.

- 2020 – OpenAI begins beta testing GPT-3, a large language model that can generate code, essays, poetry, and more at a quality that often feels human.

- 2021 – OpenAI introduces DALL·E, an AI that can understand and generate images from text prompts, bringing visual understanding and generation closer together.

Since then, models have become more multimodal (text, images, audio, video), more integrated into tools, and more accessible to everyday users.

What does the future hold?

We can’t predict the future of AI with certainty—but we can see some clear trends:

- Wider adoption: More businesses and individuals will rely on AI for analysis, automation, and creativity.

- Workforce shifts: Some tasks will be automated; others will be reshaped or created around AI oversight, integration, and strategy.

- More embodied AI: Expect continued progress in robotics, autonomous vehicles, and AI systems that interact with the physical world.

- Smarter agents: AI agents will increasingly handle multi-step tasks across apps and systems, not just answer isolated questions.

- More focus on safety and governance: As models grow more powerful, conversations around regulation, ethics, and responsible use will only intensify.

Where AI goes next depends on a mix of research breakthroughs, business incentives, regulation, and how we choose to use these tools day to day.

If you’re thinking about how to bring AI into your own work or business, the history above is a good reminder: every “sudden” breakthrough sits on decades of groundwork. The best results usually come from pairing new technology with clear goals, good data, and thoughtful human judgment.
